It isn’t often that a social media startup has the stated “ambition to become a major social platform and a leader in safety [emphasis mine].” But that’s what the team behind Yellow, a fast-growing, Paris-based videochat app that just launched in the U.S., says, and I believe them.

Details about safety measures in a minute, but first: Intention alone is huge in an industry where startups and safety seem to be on different planets, right? If nothing else, consider how crazy it would be for a social media company to state that aim publicly and in this climate (of heightened concerns about teens in digital media) if they weren’t serious. And what a great way to be noticed, too – can you see what I mean? I think we’re at the start of a new trend: safety, not orange, being “the new black” for social startups. If you doubt that, consider how short-lived the Color, Secret and Yik Yak apps were. We’re not there yet, but this is another sign.

What to look for in/behind a new app

Second, they engaged Annie Mullins, the former global head of youth safety policy for mobile carrier Vodafone who later, as an independent safety consultant, was key to the Latvia-based ASKfm social media app’s successful safety makeover (I can attest to that success as a member of that app’s Safety Advisory Board for nearly three years now). At a meeting with her and Yellow COO Marc-Antoine Durand in New York last week, I learned about the “engage and educate” approach Mullins is helping Yellow establish: communicating the community rules right at sign-up as well as when violations happen (like why someone gets a 24-hour time-out or why a profile photo, user name or the title of a chat has to be changed). This is educating users about safety as well as community rules as they go.

Third, the reality is, live-streamed videochat is here to stay, teens have shown they love it, there’s always some degree of risk (in social media as in life), and so teens need and deserve providers that aim to manage and teach them about risk. They deserve providers that require safe behavior, show users what that means, engage them in helping to keep their community safe and give them the tools to do so.

So at the meeting with Yellow last week, I heard about a lot more than intention. In fact, I will venture to say that, with the safety measures and features Yellow now has and is putting in place, this app is shaping up to be a leader in live-streamed video safety. Here’s why:

- Real faces only. Authenticity is a safety factor: Profile photos have to be users’ own faces – not their puppies, a cartoon avatar, or any other body part. Yellow uses image detection technology to detect non-faces, other people’s faces and non-photos – in real time. And if a person is reported, human moderators can tell if a photo was taken by the person’s phone or downloaded from somewhere else. But the app doesn’t wait for users to report violations and seems to be quite responsive to reports. So “moderation” actually means always-on tech + user reports + human moderators.

- The predation question: No one who signs up as 18+ can chat with users who sign up as 13-17. “It’s like minors and adults are using two different apps,” COO Durand told me. I checked. After I signed up with my actual age, the app’s “How old?” setting wouldn’t allow me to set the age range to under 18. Likewise, people who sign up as under 18 can’t set their “How old?” setting to 18-99 (I guess 100-year-olds can lie about their age!). So when a blogger quoted a parental control company (now there’s a biased source) as saying “adult predators can … pretend to be minors,” that’s not true. Sure, people lie about their age in social media, so a colleague of mine tested that. She was blocked immediately after she signed up as a teenager. [Certainly, parents need to know that, in social media as in offline life, kids can themselves seek out and communicate with creepy or criminal adults, but we do know from academic research that, in social media, the vast majority of teens just delete or block people they find creepy.]

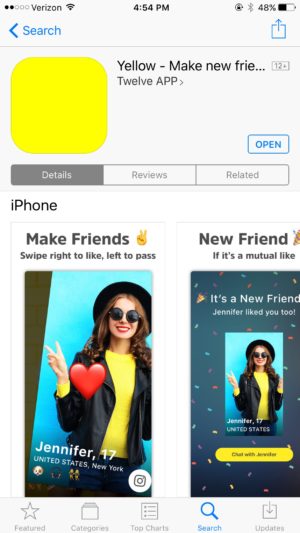

- It’s not “Tinder for teens.” The swipe function that digital parenting bloggers keep associating with Tinder is actually going away in the next few months, Durand told me, and the emphasis (users’ favorite use) of the app is increasingly on “lives” – videochats.

- “Nearby” is relative. Yellow users can’t chat with anyone closer than 18 miles; “80% of friends made on the app are 50+km away,” Yellow says, and 20% choose not to show the city they’re in. Not that an in-person meeting can’t happen, but the app is designed for chatting now, not planning in-person meetings in the future, and design is key; it constantly communicates the community’s aims.

- Violation tracking. Yellow keeps track of who’s been reported, timed out or blocked, so repeat violators can be banned permanently if need be, based on their phone’s unique ID info. A user’s phone identifiers are a source of accountability. Soon, Yellow says, they’ll start tracking age variations from a single phone ID.

- Easy reporting. The app makes it extremely easy to block or unfriend someone and report inappropriate content or behavior (touch the little flag in the upper left corner of the screen, then choose why you’re flagging it: “usage of drugs,” “underage,” “profile doesn’t represent a person,” “fake profile,” “nudity” or “other”).

- Coming soon: Yellow will give special reporting privileges to vetted “Trusted Users,” avid users who tend to have more knowledge of what’s happening “locally” (in-app) and can increase accuracy of reporting and moderators’ response.

- Parental backup: Yellow has a Safety Center on the Web with special forms for parents and law enforcement to report any content or behavior they’re concerned about (they don’t have to have a Yellow account to report). Yellow says their moderation team responds quickly, and all team members have been background-checked.

So is Yellow safe? As safe as any social media can be. That is not a copout. That is the reality of our (much of the planet’s) new, very social media environment. Safety is, well, social in social media – just by the nature of our media, it’s sourced in the community’s users as well as its design and providers. In the case of users under 18, education – from fellow users (social norms), community features and of course parents and caregivers – is really important. Including us adults educating ourselves about how social media works and why its use is so individual and situational (surprisingly human rather than technical!).

So why is “engage and educate” such a good sign? It’s what a community of guided practice does. Five years ago I proposed “7 properties of safety in the digital age” and that’s one of them. Physical spaces, like households and classrooms, are now joined by digital communities of guided practice: ideally, safe places for kids to navigate social norms, do their developmental risk assessment, grow their resilience, and practice their digital, media and social literacy on the fly, as they interact. Let’s hope, right? Not all of them are, just like not every family, classroom or school is. But it’s worth noticing those that are consciously working on it. And rather than continue reflexively suspecting or dismissing each new media startup as we’ve done for over a decade now in an endless, fearful game of whack-a-mole, why not look for signs of good community building that engages users in practicing safety, literacy and citizenship? That’s what I see signs of in Yellow. Let’s stay tuned.

Sidebar for parents

Now with more than 10 million users, Yellow just officially launched in the U.S. For now but not for long, the free app is only available in the Apple App Store. There’s a prototype in Google Play, but the Yellow team is now creating a full version for Android phones, which of course will be in Google Play soon.

Parenting blogs and news coverage of new apps are usually negative and cautionary (if not just plain scary), especially when the apps are appealing to young users, and that’s the kind of coverage that has already started happening with Yellow. But I’m sure you know it’s not the only live videochat app available. There are others without the above safety features in place – such as House Party (see NBC News) and Monkey (see CNBC). And there’s always FaceTime, Google Hangouts, Xbox Live, etc. It probably works better to show a little honest curiosity about your kids’ and their friends’ favorite digital tools for hanging out than to micro-focus on their use of a single app. Or not. It depends on them, their friends and how things are going at home, school, etc., right? Because what we learned from our work on a national Internet safety task force at Harvard in 2008 was that what’s going on in a child’s head, home and school are better predictors of online risk than any technology a child uses.

Related links

- UPDATE! As of fall 2017, Yellow got a new name – Yubo (details here) – and published their Parents Guide and Teens Guide.

- Very recent social media stats from BusinessInsider.com, which calls apps like Yellow “digital hangouts”

- “How and why teenagers use videochat,” a conference paper by researchers, and 2012 data on “teens and online video” from the Pew Research Center

- About how agency – kids’ ability to act and make change – is part of the safety equation too (not just the citizenship one): “Digital citizenship’s missing piece”

- “What’s (importantly) different about Snapchat” (back in 2014)

- “The 7 properties of safety in a digital age”

Disclosure: As a nonprofit executive, I’ve advised social media companies (not including Yellow) on youth online safety for a number of years. The ideas expressed here, informed by that work as well as 20+ years of writing about youth and digital media, are entirely my own.
