Below you’ll find background and context for this pivotal point for social media. For a more prescriptive view, see “Social media’s turning point: 5 steps needed now, no turning back.”

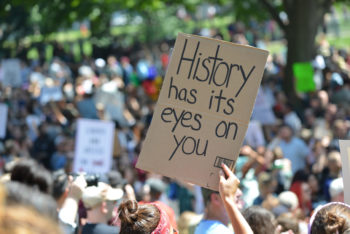

Suddenly things that looked like important, almost radical (potential) fixes a few months ago now seem like “band-aids” or symptom treatments. Suddenly we need a great deal more. By “we,” I mean us, our societies, our planet.

An example is the Oversight Board that Facebook started. Now its own entity with its first 20 members, it’s only Facebook’s brainchild, not its baby. It’s about appealing content decisions on just one (admittedly planet-spanning) social media platform. When I first heard and wrote about it, I saw it as an important social experiment. Now, “only” talking about content decisions – and that’s a massive topic – it’s far from enough to make real headway into fixing platform abuse and its ensuing social problems.

It’s literally academic, in terms of most of the Board’s makeup and its approach (with no intention to address content moderation emergencies). Legal scholarship dominates the Board so far. I get it. You absolutely need legal scholars’ input in decisions aimed at finding the right balance between protecting people and protecting freedom of speech. But now that seems like rearranging deck chairs on the sinking ship of democratic values.

We’re told there has been discussion about other platforms participating. That would be absolutely baseline now. It has to happen. The platforms have to be all in – at the very least all the global ones (at least Facebook, Reddit, Snapchat, TikTok, Twitter and YouTube) – on the discussion about how social media can support social justice and help democratic principles survive amid this global rise in populism.

Unplanned obsolescence

Before the platforms figured out how to monetize their services, they were designed to be platforms-of-the-people, and they mirror the full spectrum of humanity, from people with either evil or purely self-serving intentions to regular people like you and me to the Dalai Lama. [In other words, and in short, so much good happens in social media, but it rarely cuts through our brains’ negativity bias in a dark time and it rarely makes the news because the definition of “news” is the exception to the rule, not the rule. And besides, this post is about this moment’s awful, riveting exception.]

In a piece he just posted on Medium.com, former Facebook and Pinterest executive Barry Schnitt made an important distinction that explained a lot. He wrote that Facebook’s system was optimized for the quality of connection rather than the quality of content (quality connections as in people you care about). But that design decision was made at a very different time, when social media really was just social and people got their information from traditional news sources. Over time, people started getting all their information (including the news) on Facebook too. But, as Schnitt writes, Facebook wasn’t optimized for quality information, and so you get information filtered through the dusty, sometimes downright dark, lenses of the like-minded people of your social circles – often the most clicked, sensational or sensationalist content – because the system is optimized for connection. So what we’ve arrived at is an overwhelming 24/7 blend of that and solid information sources, where “overwhelming” has made it harder and harder to discern signal amid all the noise.

So even while social media platforms were turning into “information” services, figuring out monetization and otherwise becoming what they are now, they were also inadvertently and inexorably affecting the public discourse, providing those who would bait, outrage, sensationalize or otherwise manipulate for power or money an especially effective tool. “Social” media came to include its original meaning plus a societal one. Contrary to the original intentions for an unprecedentedly grassroots and peer-to-peer medium – the one people pictured during the Arab Spring – it was now affecting political systems worldwide (see Peter Pomerantsev’s This Is Not Propaganda for multiple examples).

The ultimate conundrum

I’m not saying social media is the cause of societal problems; if you’ve read much in NetFamilyNews, you’d know that. I’m saying it’s a factor. Long before media became social, America had “a democracy problem,” as Yascha Mounk, author of The People vs. Democracy, put it. The exposure part of what social media represents – the way that, as a decentralized communications tool, it can bring evil into the light of day – is good, is necessary. That’s part of what the platforms refer to when they talk about “newsworthiness” when people in power spread misinformation. But sheer scale (user numbers), clickbait (sensationalism + curiosity or anger) and human beings’ negativity bias put tremendous weight on the negative side of the scale. Suddenly, “free speech,” or what people who don’t care about ethics and civil discourse call “free speech,” becomes a weapon, and social media is, confoundingly, a source of both despair and comfort at the same time.

What do we do with this? Honestly, I don’t know. I don’t think any single individual can figure this out, and I’m suspicious of anyone who says they do know. Even an Oversight Board of brilliant scholars, journalists and human rights activists charged with reviewing appealed content decisions within 90 days can’t know – for the very reason that it’s independent.

No other way

The platforms must be involved in figuring this out, and not just because they helped get us here. Because nobody has more expertise than the platforms in just how difficult it is to make emergency decisions about content, in particular from heads of state – and how to make them with integrity, with the aim to minimize harm to people, free speech and societies. I continue to believe they want to; we have seen them work together on other social problems.

Decisions by Twitter and Snapchat this past week are a sign that there’s a will, and that extremely hard decisions to break through this conundrum are possible. But they’re still acting as individual companies.

The next very difficult step is for them not just to make decisions about content together, but to make decisions about how to make social media an institution for the social good in societies worldwide. With all-industry buy-in. Figuring out how to keep what’s good and remove what’s damaging to whole societies will take leadership as an industry, multiple forms of expertise within industry and with other stakeholder groups, as well as the collective will not to stop until answers have been found and put in process. No lip service. Can you see any other way?

Urgent humility

It’s time. Harvard scholar Sheila Jasanoff describes this time and what’s most needed for it as “the technologies of humility”:

For every new technology, we must leave ourselves time to ask how it can best serve humankind. We will find the answers only by remaining critical and by supplementing the forces of government, the market, and ethics with a more humble approach to innovation.

It’s past time, actually. So much is at stake.

Related links

- Barry Schnitt’s piece “How Facebook Can Fix Itself” in Medium.com

- In Psychology Today, “Is social media destroying democracy?” Dr. Po Chi Wu at Hong Kong University of Science & Technology writes about how authoritarian powers cannot tolerate the decentralized nature of social media, but they have also learned how to weaponize its social optimization model.

- About the change in social media: “It would be absurd to blame technology for a phenomenon that has much deeper political roots. But while the global challenge to democracy from within isn’t social media’s fault, the major platforms do seem to be making this crisis worse,” writes reporter Zack Beauchamp in Vox.com. “The University of Oxford’s Samantha Bradshaw and Philip Howard put out a report last year on the political abuse of social media platforms in 48 countries…. ‘There is mounting evidence that social media are being used to manipulate and deceive the voting public – and to undermine democracies and degrade public life,’ they write. ‘Social media have gone from being the natural infrastructure for sharing collective grievances and coordinating civic engagement, to being a computational tool for social control, manipulated by canny political consultants, and available to politicians in democracies and dictatorships alike.’”

- “Facebook’s new Oversight Board isn’t operational yet and won’t review Trump’s ‘shooting starts’ posts” at CNBC

- In a timely example of how social media is used for social good, TBS talk show host Conan O’Brien talks with CNN’s Van Jones, CEO of the NGO Reform Alliance, about Jones’s experience of the protests and public discussion around George Floyd’s murder by police officers in Minneapolis last week (and O’Brien’s intro to that conversation)

- In “America Is Not a Democracy,” author Yascha Mounk wrote in The Atlantic that, actually, “the rise of digital media…has given ordinary Americans, especially younger ones, an instinctive feel for direct democracy. Whether they’re stuffing the electronic ballot boxes of The Voice and Dancing with the Stars, liking a post on Facebook, or upvoting a comment on Reddit, they are seeing what it looks like when their vote makes an immediate difference. Compared with these digital plebiscites, the work of the United States government seems sluggish, outmoded, and shockingly unresponsive. As a result, average voters feel more alienated from traditional political institutions than perhaps ever before. When they look at decisions made by politicians, they don’t see their preferences reflected in them.” Check out the article to find out the results of a 2014 academic study on “the subversion of the people’s preferences in our supposedly democratic system.” The article is adapted from Mounk’s 2018 book The People vs. Democracy.

- A review of 4 books on “democracy in crisis” (including Yascha Mounk’s), by Shany Mor, associate fellow at Bard College’s Hannah Arendt Center, in Tablet

- Two key books on the complexity of content moderation: Speech Police: The Global Struggle to Govern the Internet, by David Kaye, UN special rapporteur on free expression and law professor at University of California, Irvine, and Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media, by Tarleton Gillespie, principal researcher at Microsoft Research New England

- “The Big Tech ‘fix’: Not either-or but a bit of both +,” my piece in Medium.com a year ago looking at the anti-trust discussion (see the part at the end about the “technologies of humility,” thanks to Harvard scholar Sheila Jasanoff)

Disclosure: I serve on the Trust & Safety advisories of Facebook, Snapchat, Twitter, Yubo and YouTube, and the nonprofit organization I founded and run, The Net Safety Collaborative, has received funding from some of these companies.
