Among many other things, the past 24 hours were a pivotal moment for content moderation – for online safety worldwide. I’m usually not US-centric in the way I think about online safety, but what happened in Washington and online, yesterday and since, and then with the global platforms, showed us how far our thinking – and questions – about Internet safety have come.

Last night Facebook and Twitter locked President Trump’s accounts after he incited his supporters to storm the US Capitol, and today Facebook announced it would block Trump from its platforms “at least” until the end of his term. CEO Mark Zuckerberg said in his statement that “the risks of allowing the President to continue to use our service during this period are simply too great.”

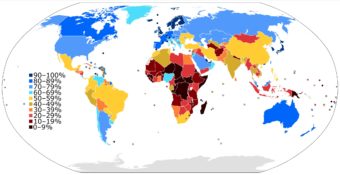

These events exposed how our thinking about online safety has changed – how we’re defining it, who and what needs protecting and who should be doing the protecting. It’s not Internet safety; it’s safety that includes the Internet. First, starting in the late 1990s, it was about children, then other vulnerable groups were added (when the Internet went social). Now online safety encompasses all of us; it includes questions of governance (of countries as well as of platforms); and it’s about protecting political systems and ways of life, not just citizens, even as the Internet is becoming the “splinternet.” Internet safety has spilled off the Internet and into every aspect of societies’ as well as of users’ and non-users’ everyday lives. That is because the Internet has evolved into a global social institution – a problem, because it is governed by businesses.

An earlier pivot point came in 2019, when the CEO of Internet infrastructure company Cloudflare pulled the plug on 8chan. The latter hasn’t come up much in the online safety discussion, but it was the dark, unpoliced platform where the terrorists who committed that year’s mass shootings in Christchurch, New Zealand, San Diego, California, and El Paso, Texas, hung out. Cloudflare had a similar dilemma to Facebook’s and Twitter’s yesterday: censor, or “de-platform,” in the interest of people’s safety? I think they made the right decision, but many feel they are overstepping, acting like governments, and they have a point. I think the platforms need governments to decide – with the help of stakeholders representing many perspectives, including users of all ages. But what, or which type of, governments? Because the problem is, in the digital age, protecting people is so much more complicated than stopping online incitement of violence. And even “just” that is exceedingly complicated.

For example, here’s what Cloudflare CEO Matthew Prince told Internet policy analyst Ben Thompson about how he worked through his decision:

If this were a normal circumstance, we would say, ‘Yes, it’s really horrendous content, but we’re not in a position to decide what content is bad or not.’ But in this case, we saw repeated, consistent harm where you had three mass shootings that were directly inspired by and gave credit to [8chan]. You saw the platform not act on any of that and in fact promote it internally. So then what is the obligation that we have? While we think it’s really important that we are not the ones being the arbiter of what is good or bad, if at the end of the day content platforms aren’t taking any responsibility, or in some cases actively thwarting it, and we see that there is real harm that those platforms are doing, then maybe that is the time that we cut people off.

Governments had to deal with the horrific aftermaths of those terrorist acts, but can they figure out what to do to prevent them? And could governments in what we think of as free societies have affected the planning that happened on 8chan? Should/can such governments ban platforms that don’t moderate the content on them? Should the US’s CDA Sect. 230, which allows for content moderation, be repealed – or should the democratic governments of jurisdictions where platforms are based explicitly tell the platforms how to police the content on them, even if it’s of a political or religious nature? What about non-democratic governments? Can governments be impartial? Can platforms be, whether or not we trust them to be – and can they be informed enough about distant nation-states and cultures to make content decisions that do the least possible harm? Should inciting violence be the standard for content deletion? Can the breaking out of violence be predicted by either platforms or governments? Is it possible to ban all dangerous speech? Should governments write platform policy as well as guidelines for content moderators? [Adding this exactly two years later: On that last one, I think not, given that some governments are as autocratic, capricious and dismissive of the people’s interests as Twitter has become, but…]

No one is sure about the answers to those questions [we still aren’t, two years later]. But after yesterday, even journalists such as Ben Thompson and Casey Newton who are pro-free speech say the President should’ve been de-platformed. For inciting violence in the halls of Congress. Many of us are still shaken by this. So what do you think now? What and where is “online safety,” and who is responsible for it? We need to decide, and the decision makers must represent all stakeholder groups, not just industry and government – including those representing vulnerable groups who believe deplatforming after the fact is far from enough. We need to talk.

Related links

- “Talking with Kids about the Events at the Capitol in Washington, D.C.,” a conversation between two outstanding family therapists and authors, Dr. Laura Markham of AhaParenting.com and Susan Stiffelman on the latter’s Parenting Without Power Struggles podcast. There’s a transcript on that page, or read Susan’s newsletter that went out today, here.

- Too little, too late? Last September, award-winning writers Catherine Buni and Soraya Chemaly made the case in OneZero, as they had in other publications since 2014, that what we’re seeing from the platforms now is far from enough – that they’ve consistently failed to calculate and mitigate risk to people’s, not just Internet users’, safety. They led with a story of violence incited online in a place far from the US capital and by nongovernmental leadership: the ethnic cleansing of the Rohingya people of Myanmar, where Facebook was virtually the Internet. And that was just the lede, one example among many equally awful ones in many countries. It’d be understandable if people in other countries wonder why the platforms can’t act as quickly when violence is happening outside the United States (I’m thinking about my friends at the Center for Social Research in India). But coming back to the “far from enough” argument, we have two compelling questions: Are the platforms policing too little, or should they even have the power to police so much?

- The (media) reality we’re dealing with now: “We have handed over the power to set and enforce the boundaries of appropriate public speech to private companies,” Tarleton Gillespie, a principal researcher at Microsoft Research, wrote in the Georgetown Law Technology Review in 2018. I agree with scholar Claire Wardle, who spoke at the first British parliamentary hearing held outside of the UK (in Washington, DC, in fact), that these are not just platforms or media companies, and with journalist Anna Wiener that they’re now social institutions – global ones. What do we-the-people do with these new social institutions that are global corporations? [I wrote about Gillespie’s important 2018 book Custodians of the Internet (aka content moderators) here.]

- The all-important international part: “Meanwhile, the Taliban’s official spokesperson still has a Twitter account. As does India’s Prime Minister Narendra Modi, even as his government cracks down on dissent and oversees nationalistic violence. The Philippines’ President Rodrigo Duterte’s Facebook account is alive and well, despite his having weaponized the platform against journalists and in his ‘war on drugs.’ The list could go on,” writes Evelyn Douek in The Atlantic (she is a Harvard Law School doctoral student and affiliate at the Berkman Klein Center for Internet & Society).

- One key stakeholder group for the discussion is content moderators. As of this year, they have a professional association of their own, TSPA, which I wrote about here.

- “It Wasn’t Strictly a Coup Attempt. But It’s Not Over, Either”: The New York Times reports that “these days, democracies tend to collapse from piecemeal backsliding that falls short of the technical definition of a coup, but is often ultimately more damaging.” (Jan. 7, 2021)

- A more prescriptive post I wrote in Medium last June, which seems so long ago. And what I suggested then (and I think holds up even more now) is that global platforms need the help of intermediaries – Internet helplines around the world, governmental or NGO, depending on the country – to decide in real time how best to prevent harm against people in their countries. Already existing in some countries (still mostly for minors’ safety), these intermediaries take different forms in different countries, from Netsafe in New Zealand to Child Focus in Belgium to hotlines in many countries that fold the Internet into their support for vulnerable adults. But what they can all provide is cultural intelligence – the “local” context the platforms need to help keep people safe worldwide.

- A song released in 2019 that predicted Jan. 6, 2021

- Clarity added later:

- “Does Deplatforming Actually Quell Hate Speech Online?” – 3 tech and communications scholars talk it through in Undark.org (originally in The Conversation)

- “The treatment is removing exposure,” forensic psychiatrist Bandy X Lee told Scientific American. When asked “What attracts people to Trump? What is their animus or driving force?” Lee, who is also president of the World Mental Health Coalition, said, “The reasons are multiple and varied, but in my recent public-service book, Profile of a Nation, I have outlined two major emotional drives: narcissistic symbiosis and shared psychosis.” It’s as if the decision makers at Facebook, Twitter and YouTube were acting on a “remove exposure” prescription.

- “This deplatforming not actually a precedent,” says Jillian York of the Electronic Frontier Foundation. “The only ‘precedent’ set here is that this is indeed the first time a sitting US president has been deplatformed by a tech company. I suppose that if your entire worldview is what happens in the United States, you might be surprised. But were you to look outside that narrow lens, you would see that Facebook has booted off Lebanese politicians, Burmese generals, and even other right-wing US politicians…never mind the millions of others who have been booted by these platforms, often without cause, often while engaging in protected speech under any definition,” she writes in her blog.

- Deconstructing Trump’s Jan. 6 speech: an extraordinarily thorough thread of 200 tweets by attorney and professor Seth Abramson of the University of New Hampshire on the 75-min. “speech now called an ‘incitement to insurrection’” that preceded the storming of the Capitol last Wednesday

- Coverage added later:

- “Facebook kicks Trump to the Oversight Board,” reports Casey Newton in Platformer.news, adding that this is one of the Board’s first few cases but no decisions have been announced as yet. [See this from me about that body, written when Facebook announced it two years ago.]

- Twitter, Trump’s main digital communications tool, banned his account altogether Jan. 8, citing risk of further violence, the Washington Post reported, later reporting on research backing up Twitter’s statement, finding “abundant evidence of threatening plans on a range of platforms large and small.”

- Of other sources for insurrection communication: In a story similar to the Cloudflare one above, the New York Times reported Jan. 8 that “Parler Pitched Itself as Twitter Without Rules. Not anymore, Apple and Google said.” The two tech giants told Parler, which is a social network popular with far-right conservatives and was the “odds-on bet to be [Trump’s] next soapbox,” that it “must better police its users if it wants a place in their app stores.” Vox later ran a story about Amazon removing Parler from its cloud service and on how Parler could come back. Parler sued Amazon and the latter urged the court to let it keep Parler offline, the Seattle Times reports. Meanwhile, “on January 12, Telegram claimed that it had attracted 25 million new users in just 72 hours,” Wired reported.

- YouTube suspended Trump’s channel, CNN reported Jan. 13.

- Reddit banned subreddit The_Donald as early as June 2020, NPR reported back then.

- As for the “splinternet”:

- Uganda ordered all social media blocked in the run-up to its election, Reuters reported Jan. 12. Is it coming down to this: a world where either anti-democratic heads of state shut down the Internet or the Internet shuts down anti-democratic heads of state? Is there a third option? I don’t know; I’m asking. This is why all the stakeholders need to talk.

- “As Trump Clashes With Big Tech, China’s Censored Internet Takes His Side,” the New York Times reported on Jan. 17: “Much of the condemnation [of the platforms] is being driven by China’s propaganda arms,” according to the Times. “By highlighting the decisions by Twitter and Facebook, they believe they are reinforcing their message to the Chinese people that nobody in the world truly enjoys freedom of speech. That gives the party greater moral authority to crack down on Chinese speech.”
