2nd important update Sept. 19: As if in direct response to the open letter from privacy activists around the world, the WeProtect Global Alliance published their own statement saying Apple must not pause its expanded protections, the Guardian reported. I stand by my thoughts in the update just below. We need privacy activists and protection activists in the same room answering the questions: Would Apple’s unpausing of its “expanded protections” do more than detect known CSAM and bring Apple up to speed with Microsoft, Facebook, Google and other tech giants without putting the privacy of human rights activists and others at risk? And crucially, would it help law enforcement find and rescue new victims of newly uploaded CSAM? [My commentary on this was just published at the Center for European Policy Analysis (CEPA).]

Update 1st week of September: Apple has hit the Pause button on the expanded child protections it announced last month, The Verge reported September 3. I think that was the right decision for two reasons: One is that, quite obviously, it needed to factor privacy in. Online safety doesn’t exist in a vacuum. It can’t be addressed without considering how safety and privacy affect each other. The other reason builds on that: The protections Apple announced were basically too little too late, especially given the privacy implications. They only bring Apple up to speed with other tech companies in fighting child sexual exploitation, a close look indicates. They’re about flagging known images already sitting in databases at the National Center for Missing and Exploited Children and the UK’s Internet Watch Foundation. I can’t see how they help law enforcement find new such images and rescue current victims as quickly as possible (see below for more on that).

What I posted Aug. 26: The August 2021 safety and privacy logjam is reminiscent of one in online safety’s earliest days, the late 1990s, when children were the only vulnerable class societies aimed to protect and, I’m pretty sure, before websites even had privacy policies. Here’s a NetFamilyNews analysis offering a bit of context, historical and otherwise….

Apple has been the focus of considerable controversy since it announced “expanded protections for children” this month. More than 90 civil society organizations, national and international and based all over the world, published an open letter calling on Apple to abandon these planned protections, referring to them as “plans to build surveillance capabilities” into its devices. More on that argument in a moment; first a little historical context.

It’s like the late 1990s, when the clash between online child protection and citizens’ rights first surfaced – and confounded the US’s highest courts for years. For example, after (all but Sect. 230 of) the Communications Decency Act was struck down by the Supreme Court on First Amendment grounds in 1997, the next such legislative effort, the Child Online Protection Act, which Congress passed in 1998, went through three rounds of litigation involving both the Supreme Court and the Third Circuit Court of Appeals before the Supreme Court finally refused in 2009 to hear appeals, effectively shutting COPA down. Back then the clash was with free speech rights. Now it’s child protection vs. privacy rights. Even as privacy activists are decrying Apple’s announcement, child protection activists are applauding it (see this by John Carr in the UK).

Apple not a joiner

All of which shows how complicated the Internet – the media environment that changed so much, including the definition of “community” and the standards that courts used – has made it to protect vulnerable people. Ironically, Apple has never been a joiner where international cross-sector work on child online safety is concerned. Unlike other tech giants, it never formed a safety advisory board and was conspicuously absent from national task forces and international forums on the subject. It did join the Technology Coalition, formed in 2006 to combat global trafficking in CSAM (child sexual abuse material), but it isn’t known for contributing technology to that cause; Microsoft, Google and Facebook are the Coalition members known for that. So it appears Apple didn’t check these latest child-protection innovations with anyone but champions of online child protection such as NCMEC (the National Center for Missing & Exploited Children), to which Internet companies are required by federal law to report CSAM.

But whether in a silo or not, Apple’s CSAM detection technology is innovative and will, if it goes forward, improve the company’s ability to address part of this egregious social problem. Which needs improvement. According to NCMEC’s 2020 report, Apple reported 265 images of CSAM last year, compared to the 20.3 million Facebook reported. Apple just wasn’t finding it. Do we want Apple to find it? That’s a hard question for privacy activists, I suspect. I say “part” of it, because this fix is only for detecting known child abuse images, not new such material and as yet undetected victimization.

Two valid positions

The arguments on the detection side of the debate are every bit as compelling as those of the privacy activists. Consider, for example, the eloquent appeal of Julie Cordua, CEO of Thorn, to find and rescue child victims faster through faster detection and reporting of this content that re-victimizes them every time it’s shared. Watch her 2019 TED Talk, now up to nearly 1.8 million views, to understand not only what this kind of ongoing victimization is like for the victim but also what it’ll take to “dismantle the communities normalizing child sexual abuse around the world today.” Thorn is a US-based nonprofit that builds technology, grows cross-sector collaboration and produces brilliant inter-generational education “to defend children from sexual abuse.”

To the privacy activists, however, Apple has crossed a dangerous line. Technology analyst Ben Thompson wrote in his piece “Apple’s Mistake” August 9 that “one of the most powerful arguments in Apple’s favor in the 2016 San Bernardino case [where Apple refused to help the FBI unlock an iPhone, was] that the company didn’t even have the means to break into the iPhone in question, and that to build the capability would open the company up to a multitude of requests that were far less pressing in nature and weaken the company’s ability to stand up to foreign governments.” He went on to say that now Apple is building that capability. In his next newsletter (not on the Web), Thompson quotes Apple’s answer to the question, “Could governments force Apple to add non-CSAM images to the hash list?” as saying, “Apple will refuse any such demands.” Thompson wrote, “I absolutely believe Apple as far as the United States is concerned.” I do too. But there’s the rub, right? What about other governments? What if Apple somehow changed its mind?

So we have two wholly valid, even compelling positions. Like many people, I care deeply about both. There’s a tremendous need for innovation and advancement in both child protection and privacy protection. Is some sort of solution possible? Let’s zoom in a little more to see if one can be found….

Is the slope that slippery?

First, to privacy activists’ concerns, Apple says that, with this development, its software isn’t scanning all of people’s photos, either in the cloud as some companies do, or on Apple devices. The software that will sit on devices will only be able to “notice” problematic photos – duplicates of known CSAM already in NCMEC’s database – not all photos. And one or two matches aren’t enough: Only if a certain number of them are detected are they flagged for human moderators’ analysis as they’re uploaded to iCloud, Apple says. If someone opts out of using iCloud, nothing gets flagged. And Apple’s software can’t “see” any new sexually explicit or nude images (of a child in a bathtub, for example) because they aren’t already hashed or identified as known CSAM.

As Apple describes it, then, a) there’s no scanning of people’s entire photo collections on corporate servers, b) only known CSAM is flagged before any human being has to see it and c) it’s only flagged when detected as part of a collection – when it crosses what Apple calls a certain threshold such as 30 photos, Apple software chief Craig Federighi told the Wall Street Journal. In its description of the technology, Apple writes that “the threshold is set to provide an extremely high level of accuracy and ensures less than a one in one trillion chance per year of incorrectly flagging a given account.” But users who feel their accounts have been deleted and reported in error can appeal.
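For readers who think in code, here is a deliberately simplified sketch of the threshold idea described above: count how many uploaded photos match a list of already-known fingerprints, and flag the account for human review only once that count reaches a threshold. This is not Apple’s implementation. Apple describes a perceptual hash plus cryptographic protections, none of which is modeled here. As an assumption for illustration only, the sketch uses plain SHA-256, which matches only byte-for-byte identical files, and every name in it (`fingerprint`, `count_known_matches`, `should_flag_for_review`, `MATCH_THRESHOLD`) is hypothetical.

```python
import hashlib

# Hypothetical threshold, loosely based on the "such as 30 photos" figure
# Federighi gave; the real value and its exact semantics are Apple's to define.
MATCH_THRESHOLD = 30


def fingerprint(image_bytes: bytes) -> str:
    """Stand-in fingerprint: SHA-256 of the raw bytes.

    Assumption for illustration only. A real system would use a perceptual
    hash that tolerates resizing and re-encoding; SHA-256 does not.
    """
    return hashlib.sha256(image_bytes).hexdigest()


def count_known_matches(uploads, known_hashes):
    """Count the uploads whose fingerprint appears in the known-hash set."""
    return sum(1 for image in uploads if fingerprint(image) in known_hashes)


def should_flag_for_review(uploads, known_hashes, threshold=MATCH_THRESHOLD):
    """Flag only when the number of known matches reaches the threshold."""
    return count_known_matches(uploads, known_hashes) >= threshold


if __name__ == "__main__":
    # Toy byte strings stand in for image files.
    known = {fingerprint(f"known-{i}".encode()) for i in range(40)}
    family_photos = [f"new-{i}".encode() for i in range(100)]

    # New, never-before-hashed photos produce no matches at all.
    print(should_flag_for_review(family_photos, known))

    # A handful of matches below the threshold still does not flag the account.
    print(should_flag_for_review([b"known-0", b"known-1"] + family_photos, known))
```

Even in this toy form, the sketch shows why such a system has nothing to say about new images: a photo that isn’t already in the known set simply never matches.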

In their open letter, privacy activists used the word “surveillance” in reference to this system but, to their credit, applied it only to users who “routinely upload the photos they take to iCloud.” I do think they’re overstating it to call this development image surveillance that users cannot opt out of – that would be true only if, and only after, some 30 CSAM photos had been detected in a user’s account.

A very different tool

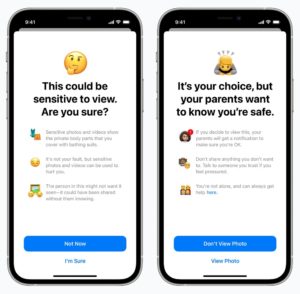

Also to privacy activists’ credit, their open letter led with a very different iPhone and iPad feature. I say to their credit because I’m biased – I feel they got this one right. I’ll come back to that in a moment but want to start by saying Apple would’ve been smart to announce it separately. It’s really a parental control tool, a forthcoming update on Apple devices for users of family iCloud accounts. Yes, it involves detection of potential CSAM, but its focus seems to be parenting, not law enforcement. It’s about alerting parents to any sharing or receiving of intimate images happening on their children’s devices. For the child, a detected photo “will be blurred and the child will be warned, presented with helpful resources, and reassured it is okay if they do not want to view this photo,” Apple says. Unfortunately, it appears the child won’t be notified that the parent knows about the blurred photo unless the parent opts in to notifying the child. Full transparency should be the default.

What I feel the privacy activists got right is that, whether you use their term “surveillance” or the term “monitoring” to describe this update, it doesn’t represent safety for all children. Certainly when a child is at risk of sexual exploitation or physical harm, monitoring can be justified. But there are other situations where monitoring itself can be harmful, not just to trust in parent-child relations but also to children themselves.

“The system Apple has developed assumes that the ‘parent’ and ‘child’ accounts involved actually belong to an adult who is the parent of a child, and that those individuals have a healthy relationship. This may not always be the case; an abusive adult may be the organiser of the account, and the consequences of parental notification could threaten the child’s safety and wellbeing,” the privacy activists write. They give one example: “LGBTQ+ youths on family accounts with unsympathetic parents are particularly at risk.”

When will we collectively acknowledge that children are not all equally vulnerable online, nor are all parents equally protective? Privacy is safety for some children.

So where does this leave us?

This Apple story is important because it…

- Illustrates how hard it is to increase child safety without decreasing other rights – for children or for all Internet users.

- Demonstrates the challenge not only of honoring children’s participation rights to the same degree that we uphold their protection rights, as laid out in the Convention on the Rights of the Child, but also of upholding the protection rights of children who are vulnerable in different ways.

- Highlights how crucial it is for Internet companies to figure out how to get law enforcement information so young victims can be found and rescued, while also committing to protect the privacy of people around the world who could be victimized by law enforcement.

- Indicates the need for those representing different interest groups, such as vulnerable classes and political dissidents around the world, to think together on how to maximize safety and reduce harm for the groups they represent as well as others in need of protection.

- Points to how individual and contextual Internet safety is, which is what makes it so hard to get safety right even for a single vulnerable population such as minors, since not all children are equally at risk online (see this about enduring research findings).

We need to acknowledge the validity and challenges of differing interests to be able to maximize safety for all. Meanwhile, child safety is really gaining momentum. Now, in the year that the UN Committee on the Rights of the Child went official on children’s digital rights, that Australia’s eSafety Commissioner’s Office released its Safety by Design tools and that the UK begins enforcing its Age-Appropriate Design Code, Internet companies are making significant improvements for young users. It may not be bias-free for a British journalist to quote the online safety lead of a 137-year-old British charity in declaring that (British) “regulation works,” but regulation certainly doesn’t hurt in this case and may actually be helping (I still think Prof. Gillian Hadfield’s approach to digital age regulation is needed, as described here). In any case, Alex Hern’s column in The Guardian provides an excellent summary of the latest child safety improvements on YouTube, Instagram and TikTok.

Related links

- Apple on its “Expanded Protections for Children”

- The open letter to Apple CEO Tim Cook from civil liberties organizations around the world (at the website of the Center for Democracy and Technology, one of its signatories)

- Thorn’s statement on Apple’s August 5 announcement and an update on Covid’s impact on child sexual exploitation online in the form of this April 2021 TEDx talk by Sarah Gardner, Thorn’s VP of external affairs

- A statement on Apple’s plan from the Phoenix 11, a group of people who describe themselves as “survivors whose child sexual abuse was recorded and, in the majority of cases, distributed online”

- An interview Thorn CEO Julie Cordua and co-founder Ashton Kutcher gave for the podcast Sway in which Cordua tells Kara Swisher that this is “a first step” on Apple’s part, and she doesn’t know “if this is the ultimate solutions that is going to get us to a place where we’re detecting child sexual abuse material at scale in…an encrypted environment, but it…tells me that this company is willing to wade in to a really nuanced, difficult issue and say, ‘we believe we can do this’.” So she’s basically saying kudos to Apple for taking this step. What Cordua doesn’t speak to is whether this moves the needle at all in detecting new material and using it to rescue child sexual abuse victims as quickly as possible. I suspect not, because Apple’s technology only detects copies of known images already sitting in the databases of NCMEC and the IWF.

- A piece by Edward Snowden on what he describes as Apple’s brand protection, not child protection

- In “Is Apple taking a dangerous step into the unknown?” Guardian tech editor Alex Hern quotes former Facebook chief security officer Alex Stamos as saying, “One of the basic problems with Apple’s approach is that they seem desperate to avoid building a real trust and safety function for their communications products…. There is no mechanism to report spam, death threats, hate speech […] or any other kinds of abuse on iMessage.”

- “Apple defends its new anti-child-abuse tech against privacy concerns: Apple’s radical new anti-abuse technology provoked both criticism and praise by scanning directly on iPhones,” reports MIT Technology Review

- The Wall Street Journal’s interview with Apple software chief Craig Federighi

- Tech reporter Casey Newton on Platformer: “Apple joins the child safety fight” and “The child safety debate intensifies”

- The latest on child safety regulation in Australia from InnovationAus

- A very early (2002) issue of Net Family News on the child protection vs. free speech debate and my “6 takeaways from 20 years in Net safety” (2017), Part 1 and Part 2

This is one of the best, most insightful and most balanced articles on children’s digital rights and safety that I’ve read in some time. Am sharing it widely. Our local UNICEF team was really impressed. Thank you

Thank you so much, Lesley. Your words mean a great deal – this is a challenging one, eh?