Susan Benesch, founder of The Dangerous Speech project, tells the story of how worried people in Kenya were in the run-up to their national election in 2013. Dangerous, inflammatory speech around the previous election in 2007 had led to widespread violence involving more than 1,000 deaths and hundreds of thousands of people being displaced, she said in a talk at Harvard’s Berkman Center, where she is a faculty associate.

But in 2013, Kenyan activists were able to counter the violent speech, especially on Twitter, which is extremely popular there. “When inflammatory speech was posted on Twitter, prominent Kenyan Twitter users (often members of the #KOT, Kenyans on Twitter, community) responded,” Benesch said, “often invoking the need to keep discourse in the country civil and productive.” It turned out to be a far more peaceful election than the 2007 one. What the #KOT peacemakers were tapping into in that very volatile situation was the power of social norms.

Social norms’ key role

“People’s behavior shifts dramatically in response to community norms,” Benesch said in her talk, explaining that 80% of people are likely to conform their speech or behavior to the norms of their community – “even trolls,” she said. [For more on social norms, see this.] In one example she gives, a Kenyan Twitter user “posted that he would be okay with the disappearance of another ethnic group and was immediately called out by other Twitter users. Within a few minutes, he had tweeted, ‘Sorry, guys, what I said wasn’t right and I take it back’.”

Think about that in the context of young people’s online speech. Acts of kindness (like that of this US high school student and these Canadian students) are demonstrations of counterspeech that not only increases students’ safety, online and offline, but also inspires and challenges their peers’ own creative civic engagement.

Net safety toolbox’s newest tool

So you might think of this as the “new kid on the block” of Internet safety – though it’s a safety tool for people of all ages. Counterspeech is not new in terms of social change, but we’re at the start of its being used, consciously, as a tool for addressing and turning around destructive online behavior. In other words, it’s a tool with enormous potential for bottom-up or peer-driven (P2P) safety and social change in both digital and physical environments – including school environments. And I hypothesize that it will be lasting because it isn’t limited to either online or offline spaces or any particular interest group, culture or nationality.

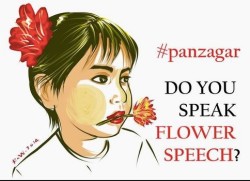

I can think of two other examples of powerful counterspeech, one in the US, the other in Myanmar. In the latter country, there’s a counterspeech movement called Panzagar, which translates to “Flower Speech,” and Facebook is its main platform because, as Benesch put it in a blog post, “Facebook so dominates online life in Myanmar that some of its users believe Facebook is the Internet, and have not heard of Google.”

Closely tied to a culture

Here’s what happened in that country: “Panzagar began by creating a meme”: an animé character with a flower in her mouth. “Taking a cue from this symbolic commitment not to use or tolerate speech that can ‘spread hate among people,’ as Nay Phone Latt [the movement’s leader, an Internet café owner, activist, and poet who was released from prison in 2012] put it.” He’d been sentenced to 20 years and imprisoned for reporting that could harm the government’s reputation. The meme was posted on Panzagar’s Facebook page and, within days, thousands of people ‘liked’ the page and “posted photographs of themselves holding flowers in their mouths … a courageous act in a country where anti-Muslim feeling is growing,” Benesch writes (does this sound familiar to Americans?).

Benesch gives other examples in her post (so check them out), but I know one she may not know about yet, one that enlists, empowers and recognizes students’ counterspeech: #iCANHELP. I wrote about that project here. Social norms are powerful in school culture just as they are in any other culture.

As for what this tool counters, here are some characteristics of dangerous speech Benesch shared at Facebook’s Compassion Research Day early this year: dehumanizing or marginalizing individuals or groups, spreading false rumors (aka lying about them), defaming people, and characterizing people as threats (thus inciting violence). We need to think of all of this as a form of violence, I believe. It does violence to targets’ emotional and social well-being, and it incites further violence.

Constructive ways of responding to dangerous speech:

- Confrontation: “Many people, even those who post hateful speech, don’t actually intend to hurt,” Benesch said at Facebook (it’s sometimes hard to tell if they’re just calling out hate speech they’ve witnessed); “some are passing on their own pain”; and many posters and tweeters are “not so thoroughly baked in their conviction.” She says it takes a small group of people to express the hate, a much larger group to condone it. This is really important. When we witness hate speech, we can ask ourselves (and our children): “Do I condone that?” If not, here’s what I can do about it….

- Counterspeech: Like the #panzagar movement in Myanmar and the #KOT group in Kenya, we can post and tweet two kinds of counterspeech: the social norms kind and the kindness kind. The social norms kind is like the Kenyan activists’ tweets entreating a peaceful election season for all citizens’ sake; the other kind is obvious: a digital pile-on of kindness, compliments, respect – countering by swamping hate with its opposite.

- Bystander recruitment: Benesch said “we’re only just beginning to explore this,” but you can see how it would work: upstanders encouraging bystanders to join them in turning situations around.

- Platform features: Apps and services can foster social norms with their product features and community standards. This is early stages too, but I wrote about this in 2012 as one of my proposed “7 properties of safety in the digital age” – I called it “infrastructure,” and it’s not just an online infrastructure. Both digital and physical environments affect behaviors and social norms, whether they’re school climate and policy, classroom atmosphere or product features. So this is actually not entirely new to parents, educators and media companies, right?

- Private spaces vs. public spaces: This is about transparency. When speech and behavior are made more public, they get modified, right? This is also nothing new. What’s new to those of us who’ve known life without social media is the collapse of boundaries between our various publics, the loss of compartmentalization – what we’d say within our family vs. what we’d say at work or in a public forum. Online tends to mash all those audiences or publics up, which affects what we say. In a way, this growing transparency increases civility – “keeps us honest,” is one way to think of it.

But what about the old “ignore the bully” or the newer “don’t feed the trolls” – the response so often recommended to children? Benesch seems to be suggesting that we feed the trolls, right?

First of all, what we’re talking about, here, is not bullying, per se. Dangerous speech can feel very personal, like bullying, but what we’re talking about, here, is largely online speech and seems to concern whole communities, if not societies. Certainly school is one form of community, but dangerous speech seems to be more at the society end of the social spectrum. Trolls can be like bullies, but the latter label typically refers to someone the target knows in offline life. The kind of cyberbullying that has the most harmful impact, research shows, is tied to offline life and mixed with the in-person kind of bullying.

Definitions still aren’t clear, but this is what I’m seeing, and Benesch avoids defining “trolls” altogether. She also says that “different ‘trolls’ have different motivations,” according to the blog post about her talk at Harvard. “Her goal is to move away from considering trolls as the problem and towards understanding dangerous speech as a broader phenomenon.” This sounds like top bullying expert Dorothy Espelage’s recommendation that we stop using the word “bullying” altogether because it’s such a loaded, un-useful term.

‘Killing the messenger’ a waste of time

Benesch said that we make some false assumptions about trolls: 1) “that if we ignore a troll, they will stop” (Zoe Quinn, a victim of severe, sustained online harassment, explained in a talk this year why “‘don’t feed the trolls’ is garbage advice”); 2) “that online hate is created by trolls” (in the few experiments that look at racist and sexist speech, at least half is produced by those who don’t fit that label); 3) “that all trolls have the same motivations and that they will respond to the same controls” (based on her talk, Quinn agrees); and 4) “that the trolls are the problem,” when it’s the effects of the dangerous speech on the audience that is the problem.

Clearly we have a lot to learn about this new safety tool so that we can make it more effective. We need to get on with this! For too long, politicians reacting to social unrest involving the Internet – such as the Paris riots of 2005 and the London riots of 2011 – have blamed the Internet or connected devices for the unrest with a “kill the messenger” sort of logic. Connectivity isn’t causative, but it can speed up people’s communicating and organizing, as well as speech that’s either hateful or healing. The Internet also provides us with an unprecedented advantage, Benesch suggests: It allows us to see and measure the effects of both the dangerous speech and the counterspeech. And it allows people like the activists of #KOT in Kenya, #Panzagar in Myanmar and student bodies in the US to demonstrate their collective powers for good. So let’s get on with this experiment!

Related links

- [Added 2 years later:] “Counterspeech DOs & DON’Ts for Students” – now an actual “tool,” a 4-frame, one-page comic with simple tips students can use to counter online harassment and cyberbullying. Released Sept. 28, 2017.

- Susan Benesch’s site about Kenya’s successful counterspeech experiment: Voices that Poison: Defining Inflammatory Speech and Limiting Its Effects

- Mainstream media: “Using ‘flower speech’ and new Facebook tools, Myanmar fights online hate speech,” a Washington Post piece last year on #Panzagar

- Counterspeech is an expression of citizenship, including digital citizenship (for as long as the latter is treated separately, anyway). It requires agency, the missing piece of the US’s long-standing discussion about youth digital citizenship.

- Research milestone for digital citizenship: Some of the US’s premier scholars in the youth online risk and safety field have just this year proposed a more balanced definition of digital citizenship, one that balances “online respect” (the behavioral part) with online participation or civic engagement. Counterspeech, online and offline, is clearly part of civic engagement and an opportunity to practice citizenship.

- Workshop on dangerous speech at the IGF: A video recording of an important multinational discussion on dangerous speech featuring Susan Benesch, Urs Gasser of Harvard’s Berkman Center and colleagues at the 2015 Internet Governance Forum in Brazil.

- Agency essential to counterspeech: People can’t make change with counterspeech – as citizens or digital citizens – without agency. So initiatives that have the effect of removing agency in the name of protection, such as Europe’s draft regulation that would require youth under 16 to get parental permission to join social media services, in effect reduce youth safety online (see this).

- On the power of social norms: as a solution to social cruelty online and a sidebar that zooms in on their power

- When I wrote “Balancing external with internal Internet safety tools” in 2013, I was thinking there was nothing new under the sun where external tools were concerned (parental controls, rules, policies, etc.), at least in terms of categories, and the public discussion badly needed to shift to the vital internal ones (resiliency, ethics, social literacy, media literacy, etc.), so this is exciting – a “tool” that blends internal and external safeguards.

- My 2012 post “Teens, social media & trolls: Toxic mix” and, this fall, insights on the phenomenon from someone who’s been severely targeted – not that different from Benesch’s observations about dangerous speech

- Toward defining “bullying,” “cyberbullying” and “harassment”
